- Why does /opt/splunk/var/run/searchpeers fill up?


On two indexers, /opt/splunk/var/run/searchpeers has grown to 20 GB of delta files and bundle files. Is it safe to delete them?


You may be hitting a known bug that can cause this issue. I would consider upgrading to one of the versions listed below to see whether that resolves it.

BUG: SPL-140831 "Splunk not cleaning up $SPLUNK_HOME/var/run/searchpeers of .delta files and matching directories whose only non-empty subdirectory has the .index extension"

Fixed in: 6.5.6+, 6.6.3+, 7.0.0+


Version 8.0.5 here.

Could someone explain what these files are for, what they do, and whether they can be deleted?


I am seeing it in 7.3.1.


I am facing the same issue in 7.0.2.

I have been doing the following, but it only seems to be a temporary fix that lasts a few days.

1. Stop splunkd and delete the bundles from the searchpeers directory.

2. Run a data rebalance from the Cluster Master.

Kindly advise if anything else can be done.


Same here. I'm having this problem on 7.1, so I'm going to open a ticket with support.


We're also seeing this issue in Splunk 7.2.1. Our indexer cluster has 41 nodes, each with a 40 GB $SPLUNK_HOME filesystem. The searchpeers directory keeps growing to 25-30 GB, which triggers the dispatch directory warning that less than the required amount of free space is left on the disk. Frustrating.




Much appreciated - we'll upgrade tonight.


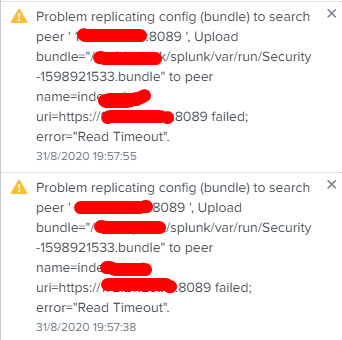

Could you please elaborate on this topic? I have the same issue: a lot of bundle and delta files are accumulating in searchpeers, and we are running 6.6.3. Is it OK to delete those files from the search peers? If not, what is the best way to reduce the size? A few of them are more than 900 MB in size. This is causing the following error message:

"Bundle Replication: Problem replicating config (bundle) to search peer ' xxxx:8089 ', Reading reply to upload: rv=-2, Receive from=https://xxxx:8089 timed out; exceeded 60sec, as per=distsearch.conf/[replicationSettings]/sendRcvTimeout"

Thank you


The timeout error during search bundle replication is likely unrelated to the question originally asked.

In your case, a larger bundle may simply be taking longer to replicate to the search peers than Splunk expects. This may have been triggered recently by the bundle growing because of a new app or lookup, or even just by network slowness.

The bundles themselves are just tar files. You can copy one to a temporary directory and untar it to see which, if any, knowledge objects are unnecessarily large. Lookup files are a likely culprit.
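As a rough illustration of the inspection described above, the following Python sketch lists the largest files inside a bundle without extracting it. It relies only on the statement that bundles are tar files. The function name `largest_members` is hypothetical, and the code only reads the archive, so it is best pointed at a copy of the bundle.

```python
import tarfile


def largest_members(bundle_path, top=10):
    """Return the `top` largest regular files in a tar archive.

    The result is a list of (size_in_bytes, member_name) pairs,
    sorted from largest to smallest.
    """
    # "r:*" lets tarfile detect the compression (if any) on its own.
    with tarfile.open(bundle_path, "r:*") as tar:
        files = [(m.size, m.name) for m in tar.getmembers() if m.isfile()]
    return sorted(files, reverse=True)[:top]
```

For example, calling `largest_members("/tmp/copy-of-bundle.bundle")` (a hypothetical path to a copied bundle) would return its ten largest entries. Lookup CSV files near the top of that list are the first thing to check.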


The thread "searchpeers folder filling up /opt/splunk..what could be the cause??" speaks about it. Is it safe to delete them?


You can. The search heads will resend the latest knowledge bundles. To limit the impact on query performance and users, I would do it during a maintenance window or outside office hours.
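Before deleting anything, it may help to see what is actually consuming the space. The sketch below is a read-only Python example that totals the top-level entries of a searchpeers directory by kind. It assumes bundle and delta files can be told apart by their file extension (for example `.bundle` and `.delta`), and the function names `tree_size` and `usage_by_kind` are hypothetical.

```python
import os


def tree_size(path):
    """Total size in bytes of the regular files under `path`.

    If `path` is itself a file, its own size is returned.
    """
    if os.path.isfile(path):
        return os.path.getsize(path)
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            full = os.path.join(root, name)
            if not os.path.islink(full):  # skip symlinks to avoid double counting
                total += os.path.getsize(full)
    return total


def usage_by_kind(searchpeers_dir):
    """Sum the sizes of top-level entries, grouped by kind.

    Directories are grouped under "directory"; files are grouped by
    extension (assumed here to distinguish bundles from deltas).
    """
    totals = {}
    for entry in os.listdir(searchpeers_dir):
        full = os.path.join(searchpeers_dir, entry)
        if os.path.isdir(full):
            kind = "directory"
        else:
            kind = os.path.splitext(entry)[1] or "(no extension)"
        totals[kind] = totals.get(kind, 0) + tree_size(full)
    return totals
```

Running `usage_by_kind("/opt/splunk/var/run/searchpeers")` on an indexer would show whether the growth comes mostly from delta files, bundle files, or expanded directories, which is useful detail to include in a support ticket.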


Perfect. Thank you.


What version of Splunk are you running?


We just upgraded last night from 6.5.2 to 6.6.2. I wonder whether the upgrade has anything to do with this behavior.